Emi Nakamura explores the macroeconomy by examining massive databases at their most granular level and finding patterns that elude others.

To determine price behavior in periods of high inflation, for example, she used retrofitted scanners to pore over drawers of long-neglected microfilm at the Bureau of Labor Statistics. The project took 10 years, but generated an incredibly rich picture of long-term price dynamics.

Emi Nakamura

Chancellor’s Professor of Economics

University of California, Berkeley

- Winner, 2019 John Bates Clark Medal, awarded to the American economist under the age of 40 who has made the most significant contribution to economic thought and knowledge

- Member, American Academy of Arts and Sciences, 2019

- Daughter of economists Alice Nakamura and Masao Nakamura, granddaughter of economist Guy Orcutt

- Ph.D., Harvard, 2007, dissertation: “Price Adjustment, Pass-Through and Monetary Policy”

Nakamura’s arduous, inventive pursuit of data is paired with novel methodological approaches. The University of California, Berkeley, economist comes at questions from ingenious angles, enabling her to test competing schools of thought with precision and clarity. Her discoveries have overturned some of economics’ oldest theories and confirmed others—moving the discipline’s discussion to a higher plane.

Nakamura has “increase[d] … our understanding of some of the most consequential, challenging, and long-standing questions in macroeconomics,” noted the American Economic Association, in awarding her the 2019 John Bates Clark Medal, given annually to the most promising American economist under 40. “Emi’s research has been transformative.”

The Clark is just one award of many that attest to her colleagues’ high regard. She received the Eccles Research Award in 2015 from Columbia University, the Elaine Bennett Research Prize in 2014 (also from the AEA), and “Top 25 Economists under 45” recognition from the International Monetary Fund in 2014.

Most of her work centers on the U.S. macroeconomy. She recently studied trends in U.S. female labor force participation and slow employment growth after the Great Recession, and the housing wealth effect in the United States. Some of her earliest papers analyzed cost pass-through in the U.S. coffee market.

But she’s looked internationally, as well, at Chinese growth and inflation data, for instance, questioning whether the economy expanded as smoothly as reported. She studied Japan’s economy in the 1980s and ’90s, in papers co-authored with her parents, also economists. With her husband, economist Jón Steinsson, a frequent co-author, and another colleague, she examined how residents of an Icelandic island fared decades after a major volcanic eruption in 1973 forced their evacuation.

COVID-19 promises to change her research agenda significantly. She’s regularly engaged now in international discussions with colleagues about how best to understand the pandemic’s evolution and curtail its devastation. But whatever its consequences, the disease won’t dull her curiosity about broad economic problems or her creativity in addressing them.

Interview conducted on March 24, 2020

Shocks and propagation

Douglas Clement: First of all, thank you so much. Your time is tight in the best of times, and these are not the best of times.

Emi Nakamura: I was just on a call this morning with a group of economists discussing policy options in the current circumstances. It’s a scary moment in history. I thought the Great Recession that started in 2007 was going to be the big macroeconomic event of my lifetime, but here we are again, little more than a decade later.

Douglas Clement: And it would be natural to spend our entire conversation on it. I definitely want to hear your thoughts on the pandemic, but because it would provide valuable context for them, I’d like to first focus on your research and then come back.

Emi Nakamura: More than other recessions, this particular episode feels like it fits into the classic macroeconomic framework of dividing things into “shocks” and “propagation”—mainly because in this case, it’s blindingly clear what the shock is and that it is completely unrelated to other forces in the economy. In the financial crisis, there was much more of a question as to whether things were building up in the previous decade—such as debt and a housing bubble—that eventually came to a head in the crisis. But here that’s clearly not the case.

There’s a possibility in this case that at some point, the shock completely disappears, say, if we get a vaccine or an effective treatment. In that case, the remaining effects will be a pure measure of the propagation effects of the initial shock, which may still be substantial.

Fiscal multiplier

Let’s begin with your work on the so-called fiscal multiplier—something that may be relevant to fiscal policy responses to the new recession. The impact of government spending on aggregate economic activity has long been debated by economists. Some say that it simply “crowds out” private spending, basically negating any impact it might have. Others estimate substantial benefit and advocate the use of fiscal stimulus to counteract economic downturns, as during the Great Recession.

You measured the impact of federal military spending on individual state economies and came up with a substantial fiscal multiplier.

Could you tell us a bit about that research and explain how it might—or might not—be relevant to the likely increase in federal spending we’ll be seeing in coming months?

Jón [Steinsson] and I studied the effects of fiscal stimulus by exploiting the differential impact of military spending on different U.S. states, drawing on very detailed military procurement data. For example, in the Carter-Reagan military buildup, there was a big increase in government spending for the country as a whole on average. But the effect falls much more on California—basically because they build airplanes—than it does on Illinois, where basically nothing happens in terms of defense spending. So this provides a natural experiment. We ask whether this translates into a bigger increase in output in California versus Illinois.

Now, there is an issue of interpretation here because we’re measuring this relative multiplier: what happens when government spending increases in State A versus State B. And it’s not totally clear that you can translate that into an aggregate multiplier, where you’re asking what happens if government spending increases in the country as a whole. There are general equilibrium effects that might push back at the national level that might not arise if you’re comparing one state versus another state. We use a model to map the evidence at the regional level to inform policy questions about the effects of fiscal policy at a national level.
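To make the distinction between a relative and an aggregate multiplier concrete, here is a minimal sketch on simulated data. It is not the authors’ estimation strategy: the state sensitivities, the economy-wide offset (`national_offset`), and the true multiplier of 1.5 are all invented assumptions. Removing year averages (year fixed effects) strips out anything that hits every state equally in a given year, such as a national monetary or tax response, so the within-year slope recovers the relative multiplier, while the national time series mixes that common response back in.

```python
import random

def simulate_state_panel(n_states=50, n_years=40, true_multiplier=1.5,
                         national_offset=-0.8, seed=0):
    """Simulated (hypothetical) state-year changes in military spending dg and output dy."""
    rng = random.Random(seed)
    # Fixed exposure of each state to national buildups (high for a "California", low for an "Illinois").
    sensitivity = [rng.uniform(0.0, 2.0) for _ in range(n_states)]
    rows = []
    for year in range(n_years):
        national_buildup = rng.gauss(0.0, 1.0)
        # Assumed economy-wide response (e.g. monetary tightening) that hits every state equally this year.
        common = national_offset * national_buildup + rng.gauss(0.0, 1.0)
        for s in range(n_states):
            dg = sensitivity[s] * national_buildup
            dy = true_multiplier * dg + common + rng.gauss(0.0, 0.5)
            rows.append((year, dg, dy))
    return rows

def _by_year(rows):
    """Group (dg, dy) pairs by year."""
    groups = {}
    for year, dg, dy in rows:
        groups.setdefault(year, []).append((dg, dy))
    return list(groups.values())

def _slope(pairs):
    """Least-squares slope of y on x for a list of (x, y) pairs."""
    x_bar = sum(x for x, _ in pairs) / len(pairs)
    y_bar = sum(y for _, y in pairs) / len(pairs)
    num = sum((x - x_bar) * (y - y_bar) for x, y in pairs)
    den = sum((x - x_bar) ** 2 for x, _ in pairs)
    return num / den

def relative_multiplier(rows):
    """Slope of dy on dg after removing year means (year fixed effects)."""
    demeaned = []
    for obs in _by_year(rows):
        g_bar = sum(g for g, _ in obs) / len(obs)
        y_bar = sum(y for _, y in obs) / len(obs)
        demeaned.extend((g - g_bar, y - y_bar) for g, y in obs)
    return _slope(demeaned)

def aggregate_multiplier(rows):
    """Slope of national (year-average) dy on national (year-average) dg."""
    national = [(sum(g for g, _ in obs) / len(obs), sum(y for _, y in obs) / len(obs))
                for obs in _by_year(rows)]
    return _slope(national)

rows = simulate_state_panel()
print(relative_multiplier(rows), aggregate_multiplier(rows))
```

Because the assumed economy-wide offset is negative here, the aggregate slope lands well below the relative one; with a different assumed national response the gap could go the other way, which is why a model is needed to map regional evidence into a national multiplier.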

Now let me turn to the current situation. While a lot of the proposed spending bills are labeled as “stimulus,” in some ways that’s a misnomer. The idea behind traditional Keynesian fiscal stimulus is that you’re trying to get people to spend and work. This, in turn, leads to more production and more employment. That’s how one can, in principle, get a fiscal multiplier above one: government spending must not only avoid crowding out other production but actually generate more of it, as the people who earn income producing the goods the government demands spend that extra income, creating still more demand for goods.

In this case, the situation is very different. Many people literally can’t work and can’t spend. The service sector accounts for a huge fraction of the economy and, right now, many of the workers in that industry—say, hairdressers—are literally forbidden to work. It’s also hard to buy things! Many stores, other than food stores, are also closed. Online sellers like Amazon and online grocers like Instacart are overwhelmed, and it’s hard to get a delivery.

So, even though the policies are called stimulus policies, it’s a very different situation since there are a lot of barriers—for good reason—to both working and spending. Of course, if these barriers get lifted, then perhaps the current policies will start to operate more like traditional stimulus.
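The “rounds of spending” logic behind a multiplier above one can be sketched with the textbook Keynesian cross. This is a deliberately simple illustration, not the model in Nakamura and Steinsson’s work: it ignores taxes, imports, prices, and crowding out, and the marginal propensity to consume (`mpc`) values are assumptions. A low `mpc` is one crude way to represent households that cannot spend because stores are closed.

```python
def multiplier_by_rounds(mpc, rounds):
    """Total output generated by $1 of government purchases after `rounds` rounds.

    Round 0 is the purchase itself; each later round re-spends a fraction `mpc`
    of the income created in the previous round (textbook Keynesian cross).
    """
    total, injection = 0.0, 1.0
    for _ in range(rounds):
        total += injection
        injection *= mpc
    return total

# Households free to spend: the sum converges to 1 / (1 - 0.6) = 2.5.
print(multiplier_by_rounds(0.6, 100))
# Households barred from spending most new income: about 1.11.
print(multiplier_by_rounds(0.1, 100))
```

The geometric sum 1 / (1 − mpc) only exceeds one by much when each round of income is actually re-spent, which is the channel that lockdown barriers shut down.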

Price rigidity

Let’s turn to some of your first research, on price dynamics. In your widely cited 2008 paper, “Five Facts About Prices,” you examine many dimensions of pricing: the heterogeneity of price changes across products, the role of sale prices versus regular prices, the role of product turnover, and differences in how often consumer prices change compared with producer prices.

Your most striking finding was the degree of price rigidity: regular prices change only every eight to 11 months or so, far less often than Pete Klenow and Mark Bils had found in earlier work.
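Studies of price rigidity typically measure a monthly frequency of price change and then convert it into a duration. A common conversion, sketched below, assumes a constant hazard of adjustment so that spell lengths are exponential in continuous time; the frequencies used in the example are illustrative assumptions, not estimates from either paper.

```python
import math

def implied_duration_months(monthly_frequency):
    """Mean price-spell length implied by a monthly frequency of price change.

    Assumes a constant hazard of adjustment: the continuous-time hazard is
    -ln(1 - f), and the mean duration is its reciprocal.
    """
    if not 0.0 < monthly_frequency < 1.0:
        raise ValueError("frequency must be strictly between 0 and 1")
    return -1.0 / math.log(1.0 - monthly_frequency)

for f in (0.10, 0.21):
    print(f, round(implied_duration_months(f), 1))
```

With these inputs, a 10 percent monthly frequency implies spells of roughly nine and a half months, while 21 percent implies a little over four, which shows how sensitive duration estimates are to what counts as a price change.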

At a theoretical level, why is this lack of price flexibility important for understanding the impact of demand shocks or monetary policy? And, at a practical level, why do firms have trouble changing prices?

When there is a negative shock to consumer demand, when consumers start spending less for some reason—say, the value of their house falls or a pandemic happens or something like that—then two things could happen.

One is that quantities of spending could fall, so people buy less. But another is that prices could fall. And, to some extent, that could offset the effects of the reduction in demand. On the one hand, people inherently want to buy less because of this outside reason. But on the other hand, some of that effect is buffered by the fact that prices may temporarily be lower.

If prices fall a lot, that would help stabilize the effect on employment and how much is being produced in the economy. The extent to which prices play this stabilizing or buffering role is one of the oldest questions in macroeconomics. In Keynesian models, the prices just don’t adjust very rapidly. As a consequence, prices don’t play the kind of buffering role that they do in neoclassical models, in which the price system functions very efficiently.

In neoclassical theory, prices adjust right away to shocks, so markets clear quickly.

Yes, whereas in a Keynesian model, even a massive reduction (or massive increase) in demand may not result in very much of a price response. As a consequence, you see a massive reduction in the amount that’s actually produced, in the number of people working, and so on.
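The buffering role of prices can be illustrated with the accounting identity that real output equals nominal spending divided by the price level. The sketch below is a toy under stated assumptions: a 10 percent fall in nominal spending, and a hypothetical share of prices (`share_adjusting`) that falls in proportion immediately while the rest stay put.

```python
def real_output_after_demand_shock(nominal_drop, share_adjusting, baseline=100.0):
    """Toy: real output = nominal spending / price level (hypothetical setup).

    nominal_drop: fractional fall in nominal spending (0.10 means 10 percent).
    share_adjusting: fraction of prices that fall in proportion right away;
    the remaining prices stay at their old level.
    """
    nominal_spending = baseline * (1.0 - nominal_drop)
    price_level = 1.0 - share_adjusting * nominal_drop
    return nominal_spending / price_level

for share in (1.0, 0.5, 0.0):  # fully flexible, partly rigid, fully rigid
    print(share, round(real_output_after_demand_shock(0.10, share), 1))
```

With fully flexible prices, real output stays at its baseline of 100 (the neoclassical case); with fully rigid prices, the whole 10 percent fall in spending shows up as lost output (the Keynesian extreme).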

And this is also true for monetary policy?

Absolutely. The simplest example is the case where the money supply doubles. If the amount of money in the economy doubles, and all the prices in the economy also double, then conceptually, nothing has actually changed. It’s like if the United States switched from the Imperial system to the metric system. Even though people would get taller in some sense—“taller” in centimeters than in inches—obviously, nothing would literally change with people’s heights.

Great analogy. And you were able to document in your research that prices are rigid?

Yes, exactly. You might think that it’s very easy to go out there and figure out how much rigidity there is in prices. But the reality was that at least until 20 years ago, it was pretty hard to get broad-based price data. In principle, you could go into any store and see what the prices were, but the data just weren’t available to researchers in systematically tabulated form.

There was an important paper by Mark Bils and Pete Klenow—the one you referred to earlier—for which the authors managed to convince the Bureau of Labor Statistics to let them study price rigidity in the data underlying the U.S. consumer price index. Their work was important because it gave a look at a really broad-based cross-section of products. Previously, much of what had been studied in the economics literature were prices for individual goods like newspapers. But there was the question of whether those goods were unusual or typical of all goods.

Once macroeconomists started looking at data for this broad cross section of goods, it was obvious that pricing behavior was a lot more complicated in the real world than had been assumed. If you look at, say, soft drink prices, they change all the time. But the question macroeconomists want to answer is more nuanced. We know that Coke and Pepsi go on sale a lot. But is that really a response to macroeconomic phenomena, or is that something that is, in some sense, on autopilot or preprogrammed?

Another question is: When you see a price change, is it a response, in some sense, to macroeconomic conditions? We found that, often, the price is simply going back to exactly the same price as before the sale. That suggests that the responsiveness to macroeconomic conditions associated with these sales was fairly limited.
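One way to separate temporary sales from regular price changes is a simple “V-shaped” filter: a price cut that returns to exactly the pre-sale price within a few months is treated as a sale. The sketch below is a hypothetical filter written for illustration, not the procedure used in the papers; the three-month window (`max_sale_length`) and the price series are assumptions.

```python
def regular_prices(prices, max_sale_length=3):
    """Replace V-shaped temporary sales with the pre-sale (regular) price.

    A cut counts as a sale only if the price returns to exactly the previous
    regular price within `max_sale_length` periods (hypothetical rule).
    """
    regular = list(prices)
    n, i = len(prices), 1
    while i < n:
        if prices[i] < regular[i - 1]:
            ref = regular[i - 1]
            j = i
            # Scan forward while the price stays below the reference, up to the window.
            while j < n and j - i < max_sale_length and prices[j] < ref:
                j += 1
            if j < n and prices[j] == ref:
                for k in range(i, j):
                    regular[k] = ref  # fill the sale months with the regular price
                i = j
                continue
        i += 1
    return regular

def change_frequency(series):
    """Share of month-to-month transitions in which the price changes."""
    changes = sum(1 for a, b in zip(series, series[1:]) if a != b)
    return changes / (len(series) - 1)

raw = [2.0, 2.0, 1.5, 1.5, 2.0, 2.0, 2.5, 2.5]
print(change_frequency(raw), change_frequency(regular_prices(raw)))
```

In this series, three of seven transitions are price changes, but only one of them (the rise to 2.5) survives once the two-month sale is filtered out.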

One of my papers with Jón is co-authored with Ben Malin at the Minneapolis Fed and two marketing professors, Eric Anderson and Duncan Simester. In this project, we were able to learn more about the institutional features of price setting. For instance, in a lot of cases, it’s essentially trade promotion calendars that determine the timing of these sales, and these calendars are agreed on substantially in advance.

One of the things that’s been very striking to me in the recent period of the COVID-19 crisis is that even with incredible runs on grocery products, when I order my online groceries, there are still things on sale. Even with a shock as big as the COVID shock, my guess is that these things take time to adjust.

One thing that’s important to recognize is that price rigidity doesn’t just change how the economy responds to demand shocks, say, from government spending or consumer optimism; it also changes how the economy responds to classic supply shocks, such as productivity shocks. The COVID-19 crisis can be viewed as a prime example of the kind of negative productivity shock that neoclassical economists have traditionally focused on. But an economy with price rigidity responds much less efficiently to that kind of an adverse shock than if prices and wages were continuously adjusting in an optimal way.

Effectiveness of monetary policy

Let’s move to a topic closely related to price rigidity: the effectiveness of monetary policy. Price rigidity implies that monetary policy is going to be “nonneutral”—that changing interest rates will have an effect on aggregate economic activity, as central banks intend. In your 2010 paper on monetary nonneutrality, you established that, indeed, that is the case.

But in a 2016 paper, you found that forward guidance on monetary policy seemed less effective than many, including Michael Woodford at Columbia—your adviser in college—and former Chair Ben Bernanke, have suggested. And then your 2018 paper about high-frequency identification of news about monetary policy actions showed that policy announcements can actually be counterproductive: news of monetary tightening, for example, can lead people to expect stronger economic growth.

It leaves me puzzled about monetary policy effectiveness. What forms can be effective? Why aren’t others?

Our early work, the 2010 paper, was working within a standard model to answer the question of what the new findings about the frequency of price change implied about the effectiveness of monetary policy.

The key thing we were trying to integrate into the model was the enormous variation in how often prices change. Even though, on average, prices change a lot, the price changes are concentrated very heavily in particular sectors of the economy. Gasoline prices change all the time, for example, as do airline ticket prices. But then there are massive parts of the economy, like services, where prices change quite infrequently—more like once a year. Also, while sales are very common in, say, soft drinks, they aren’t common at all for haircuts or meals in restaurants.

This enormous amount of heterogeneity in pricing behavior across different sectors of the economy means that even if, on average, there are a lot of price changes, the price changes aren’t very “effective.” So monetary policy can have big effects, because the flexibility is lumped together in particular sectors of the economy.
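Why heterogeneity makes average flexibility less “effective” can be seen in a Calvo-style toy, which ignores the menu-cost and input-output features of the actual model. After a one-time permanent rise in nominal demand, the real effect in each period is proportional to the share of prices still at their old level. A sector that resets a fraction f of its prices each month contributes 1/f in total, and because the average of 1/f exceeds 1 over the average of f (Jensen’s inequality), sticky sectors dominate. The two-sector weights and frequencies below are illustrative assumptions.

```python
def cumulative_output_effect(weights, frequencies, horizon=None):
    """Cumulative real effect of a one-time permanent rise in nominal demand (Calvo-style toy).

    In sector s a fraction f_s of prices resets each month, so the share still at
    the old price in month t (t = 0 is the month of the shock) is (1 - f_s) ** t.
    Summed over all months this equals 1 / f_s; `horizon` truncates the sum instead.
    Assumes no strategic complementarities: adjusters move fully to the new level.
    """
    total = 0.0
    for w, f in zip(weights, frequencies):
        if horizon is None:
            total += w / f
        else:
            total += w * sum((1.0 - f) ** t for t in range(horizon + 1))
    return total

weights = [0.5, 0.5]   # hypothetical: half "gasoline-like", half "services-like"
freqs = [0.9, 0.1]     # illustrative monthly frequencies of price change
avg_freq = sum(w * f for w, f in zip(weights, freqs))

heterogeneous = cumulative_output_effect(weights, freqs)
homogeneous = cumulative_output_effect([1.0], [avg_freq])
print(heterogeneous, homogeneous)  # about 5.56 versus 2.0
```

Both economies have the same average frequency of price change, yet the heterogeneous one generates nearly three times the cumulative real effect.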

The other thing we wanted to integrate is interdependencies—production chains that cross over different parts of the economy. That means you can’t think of different economic sectors as independent of one another.

So you introduced not just price heterogeneity, but also intermediate inputs—input-output linkages.

Right, exactly. We showed that augmenting the basic monetary model with these two features, both of which are definitely in the data, meant that monetary policy had much larger effects.

But the standard model has another feature that we hadn’t thought much about at the time, which is that the economy responds very strongly to interest rate changes that are very far out in time. This has been referred to as the “forward guidance puzzle” in the literature because it’s really quite unintuitive and surprising.

To give you a sense of this, suppose somebody tells you that interest rates are going to be a percentage point lower for one quarter five years from now. It turns out that in the model, this kind of “forward guidance” about interest rates can have a larger effect on the economy than if the Fed lowers interest rates by one percentage point today.

But if you say to most economists, as a thought experiment: “What would you do differently if you knew that interest rates were going to be one percentage point lower 10 years from now?” the typical reaction is, “I would do nothing.” So it’s quite surprising even to economists that this standard model implies this large response.

We spent a lot of time thinking about where these features of the model came from. Our first hypothesis was that it had to do with the Keynesian features of the model. But we eventually realized the central problem was the basic model of consumption shared by most macroeconomic models, as opposed to the Keynesian features.

In a standard model, people’s savings decisions are completely dominated by their thinking about interest rates, so if interest rates are going to be a little bit higher in the future, they should save a little bit more. If they’re going to be a little bit lower in the future, they should spend a little bit more. This is called the intertemporal substitution motive.

But in our paper, we’re arguing that that motive may actually be quite small.

The relevant line from your paper, I think, is, “The precautionary savings effect counteracts the standard intertemporal substitution motive.”

Right. In reality, a lot of people are thinking more about building up a sufficient buffer stock of savings in their bank account than they are about interest rates. When something really bad happens—say, you lose your job, as many are right now—then it matters a lot to have a buffer stock of savings.

It turns out that if this is what dominates people’s motive to save, then announcements about interest rates far in the future are much less powerful. In this sense, the results of the model line up much more with people’s intuitions.
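The forward guidance puzzle and its proposed fix can be sketched with the log-linearized consumption Euler equation, x_t = alpha * E_t[x_{t+1}] - sigma * r_t, where x is the output gap and r the real-rate gap. In the standard model alpha = 1. Setting alpha below 1 is used here as a reduced-form stand-in for the precautionary-savings effect; that is an assumption of this sketch, not the paper’s incomplete-markets model. The sketch also omits the Phillips curve, whose inflation feedback makes distant cuts even more powerful in the full standard model.

```python
def output_gap_today(cut_quarter, sigma=1.0, alpha=1.0, cut=1.0):
    """Today's output-gap response to a one-quarter real-rate cut `cut_quarter` quarters ahead.

    Iterates x_t = alpha * x_{t+1} - sigma * r_t backward from a terminal gap of
    zero, with r_t = -cut in the cut quarter and zero in every other quarter.
    """
    x_next = 0.0
    for t in range(cut_quarter, -1, -1):
        r_t = -cut if t == cut_quarter else 0.0
        x_next = alpha * x_next - sigma * r_t
    return x_next

for q in (0, 4, 20):  # today, one year ahead, five years ahead
    print(q, output_gap_today(q), round(output_gap_today(q, alpha=0.97), 3))
```

With alpha = 1, a one-point cut five years out moves output today exactly as much as a cut today; with alpha = 0.97 (an illustrative value), its effect shrinks geometrically to 0.97 ** 20, closer to the intuition that distant promises matter less.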

I don’t think that our paper is the end of the story, by any means. There are a number of explanations for why forward guidance would be less effective than it is in these simple models. Another, for example, is how, and even whether, people process announcements about future interest rates.

This is likely to be a continuing conversation. Forward guidance has actually been going on for a long time, at least since the 1990s. But this has really come to the center of monetary economics in the recent period, as current interest rates have often been close to zero. As it turned out, we only had a brief period between the last recession and the current one when interest rates were above zero. In this kind of environment, when everyone knows current interest rates will be close to zero for some time, understanding forward guidance—announcements about where future interest rates are going to be—will continue to be a central part of the monetary policy arsenal.

The “information effect”

That brings us to the most recent paper, your 2018 article on the “information effect” of monetary policy shocks.

This paper really grew out of our attempts to estimate the effects of monetary policy using a “high-frequency” approach. The basic challenge in estimating the effects of monetary policy is that most monetary policy announcements happen for a reason.

For example, the Fed has just lowered interest rates by a historic amount. Obviously, this was not a random event. It happened because of this massively negative economic news. When you’re trying to estimate the consequences of a monetary policy shock, the big challenge is that you don’t really have randomized experiments, so establishing causality is difficult.

Looking at interest rate movements at the exact time of monetary policy announcements is a way of estimating the pure effect of the monetary policy action.

And that’s why you look at a 30-minute window around the announcement.

Yes, exactly. Intuitively, we’re trying to get as close as possible to a randomized experiment. Before the monetary policy announcement, people already know if, say, negative news has come out about the economy.

The only new thing that they’re learning in the 30 minutes around the announcement is how the Fed actually chooses to respond. Perhaps the Fed interprets the data a little bit more optimistically or pessimistically than the private sector. Perhaps their outlook is a little more hawkish on inflation. Those are the things that market participants are learning about at the time of the announcement.
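Measuring a rate change in a narrow window around an announcement comes down to taking the last quote before the window opens and the last quote before it closes. The sketch below assumes sorted, timestamped quotes; the split of the 30 minutes into 10 minutes before and 20 minutes after the announcement, the date, and the yields are assumptions for illustration only.

```python
from bisect import bisect_right
from datetime import datetime, timedelta

def window_change(times, values, announcement,
                  before=timedelta(minutes=10), after=timedelta(minutes=20)):
    """Change in a quoted rate over [announcement - before, announcement + after].

    Uses the last quote at or before each window edge; `times` must be sorted.
    """
    def last_quote_at_or_before(t):
        i = bisect_right(times, t)
        if i == 0:
            raise ValueError("no quote at or before window start")
        return values[i - 1]

    start, end = announcement - before, announcement + after
    return last_quote_at_or_before(end) - last_quote_at_or_before(start)

# Hypothetical quotes (percent) around a hypothetical 14:00 announcement.
day = (2000, 1, 1)
times = [datetime(*day, 13, 45), datetime(*day, 13, 55), datetime(*day, 14, 5),
         datetime(*day, 14, 15), datetime(*day, 14, 25)]
values = [1.50, 1.50, 1.42, 1.40, 1.38]
print(window_change(times, values, datetime(*day, 14, 0)))  # roughly -0.10
```

The 14:25 quote falls outside the window and is ignored, which is the point of the design: later news should not contaminate the measured surprise.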

The idea is to isolate the effects of the monetary policy announcement from the effects of all the macroeconomic news that preceded it. Of course, you have to have very high-frequency data to do this, and most of this comes from financial markets. We are using data on nominal and real interest rates—the latter is measured from Treasury Inflation-Protected Securities (TIPS) yields. The difference between the two lets us measure how people’s inflation expectations respond to expansionary and contractionary monetary policy shocks.
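The nominal-minus-real comparison can be sketched as follows: the change in breakeven inflation is the change in the nominal yield minus the change in the TIPS yield, and a regression across announcements of that change on the policy shock summarizes how inflation expectations respond. This ignores inflation-risk and liquidity premia in breakevens (an assumption), and the inputs are toy numbers constructed so that real yields absorb 90 percent of each nominal move; they are not estimates from any paper.

```python
def breakeven_changes(d_nominal, d_real):
    """Change in breakeven inflation = change in nominal yield minus change in real (TIPS) yield."""
    return [n - r for n, r in zip(d_nominal, d_real)]

def ols_slope(x, y):
    """Slope from a univariate least-squares regression of y on x."""
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxy = sum((a - x_bar) * (b - y_bar) for a, b in zip(x, y))
    sxx = sum((a - x_bar) ** 2 for a in x)
    return sxy / sxx

# Toy inputs in percentage points (hypothetical, one entry per announcement).
policy_shocks = [-0.10, -0.05, 0.00, 0.05, 0.10]
d_nominal = [-0.10, -0.05, 0.00, 0.05, 0.10]
d_real = [0.9 * d for d in d_nominal]  # real yields move almost one for one

d_breakeven = breakeven_changes(d_nominal, d_real)
print(round(ols_slope(policy_shocks, d_breakeven), 3))
```

A small slope like the one these toy inputs produce is what a muted response of expected inflation looks like in this framework: most of the nominal move shows up in real rates.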

Did the results surprise you?

The results completely surprised us. The conventional view of monetary policy is that if the Fed unexpectedly lowers interest rates, this will increase expected inflation. But we found that this response was extremely muted, particularly in the short run. The financial markets seemed to believe in a hyper-Keynesian view of the economy. Even in response to a significant expansionary monetary shock, bond markets priced in very little change in expected inflation.

The first version of this paper just reported these results and noted that it implied, basically, a very flat Phillips curve—that is, inflation responds very little to changes in unemployment.

But then, when we were presenting the paper in England, I recall that Marco Bassetto asked us to run one more regression looking at how professional forecasters’ forecasts of GDP growth responded to monetary shocks. The conventional view would be that an expansionary monetary policy shock would lead them to forecast higher growth.

When we ran the regression, the results actually went in the opposite direction from what we were expecting! An expansionary monetary shock was actually associated with a decrease in growth expectations, not the reverse! So we had to rewrite the paper.

And your idea is that the market reacts in that unintuitive way because the announcement itself conveys information about what the Fed was thinking about the economy.

Yes, exactly. At first, we felt very skeptical about this result because the Fed doesn’t really have very much differential private data about the state of the economy. Perhaps they get to see industrial production a little bit early. But for the most part, they have the same data as everyone else.

Eventually, we came to the view, however, that while it’s true that they don’t have a lot of differential data relative to the private sector, they do carry out an independent set of analyses. And research in the finance literature has shown that when analysts or others make announcements, even based on public data, they seem to move markets.

From this perspective, our regression results don’t seem so crazy. When Jay Powell or Janet Yellen or Ben Bernanke says, for example, “The economy is really in a crisis. We think we need to lower interest rates,” the private sector thinks they can learn something from that.

It’s not necessarily a statement that the private sector knows less than the Fed or that the Fed knows less than the private sector. It’s just saying that perhaps the private sector thinks they can learn something about the fundamentals of the economy from the Fed’s announcements. This can explain why a big, unexpected reduction in interest rates could actually have a negative, as opposed to a positive, effect on those expectations.

Elusive costs of inflation

Let’s turn to the costs of inflation. Monetary policymakers are usually concerned about the so-called Phillips curve trade-off between inflation and unemployment that you just mentioned. And since the ’80s, the focus really has been on preventing inflation. The extreme inflation of the 1970s taught the Fed to guard very aggressively against another such episode.

But your 2018 paper concluded that concerns about the damage wrought by inflation have perhaps been exaggerated. And, in part, that’s because regular prices change more frequently in high-inflation environments. Can you elaborate on that research and how you reached that conclusion?

Absolutely. A long-standing idea about the costs of inflation is that a lot of inflation could lead to inefficiencies in the basic functioning of the price system associated with price dispersion. Relative prices might get distorted simply because one firm chooses to wait one more month to adjust its prices than another firm. This could be quite costly from the perspective of Adam Smith’s notion of the invisible hand allocating production efficiently across the economy. In this scenario, relative prices aren’t really providing the right signal to consumers in terms of what to buy and firms in terms of what to produce.

We looked at the behavior of prices during the U.S. Great Inflation, right around 1980, to try to empirically study how much relative prices were affected by periods of high inflation. This was somewhat hard to do in practice because the existing price data underlying the CPI only went back to 1988. So this project involved going back to microfilm that we discovered at the Bureau of Labor Statistics to reconstruct what was going on with prices at the time of the Great Inflation.

Recovering these data was actually quite an odyssey. We first learned of the existence of these data from some of the older staff at the Bureau of Labor Statistics, who pointed us to some filing cabinets where some really old microfilm cartridges were stored. Actually recovering the data took us a decade, largely because the BLS is so careful about their data and won’t let it leave the building, for privacy reasons, even when the data are several decades old. The project involved finding microfilm readers that could be retrofitted for the very old cartridges that the BLS had and then finding a company that could help us build software to convert the scanned images into machine-readable data. But eventually we were able to construct this data set on prices going back to the late 1970s.

We expected that as inflation rose to over 10 percent during the Great Inflation, we would see some increase in price dispersion. That’s the kind of classic cost of inflation that economists talk about; it’s embedded into standard monetary models. We thought we would find evidence that relative prices were distorted by high inflation because firms failed to adjust their prices in a synchronized way.

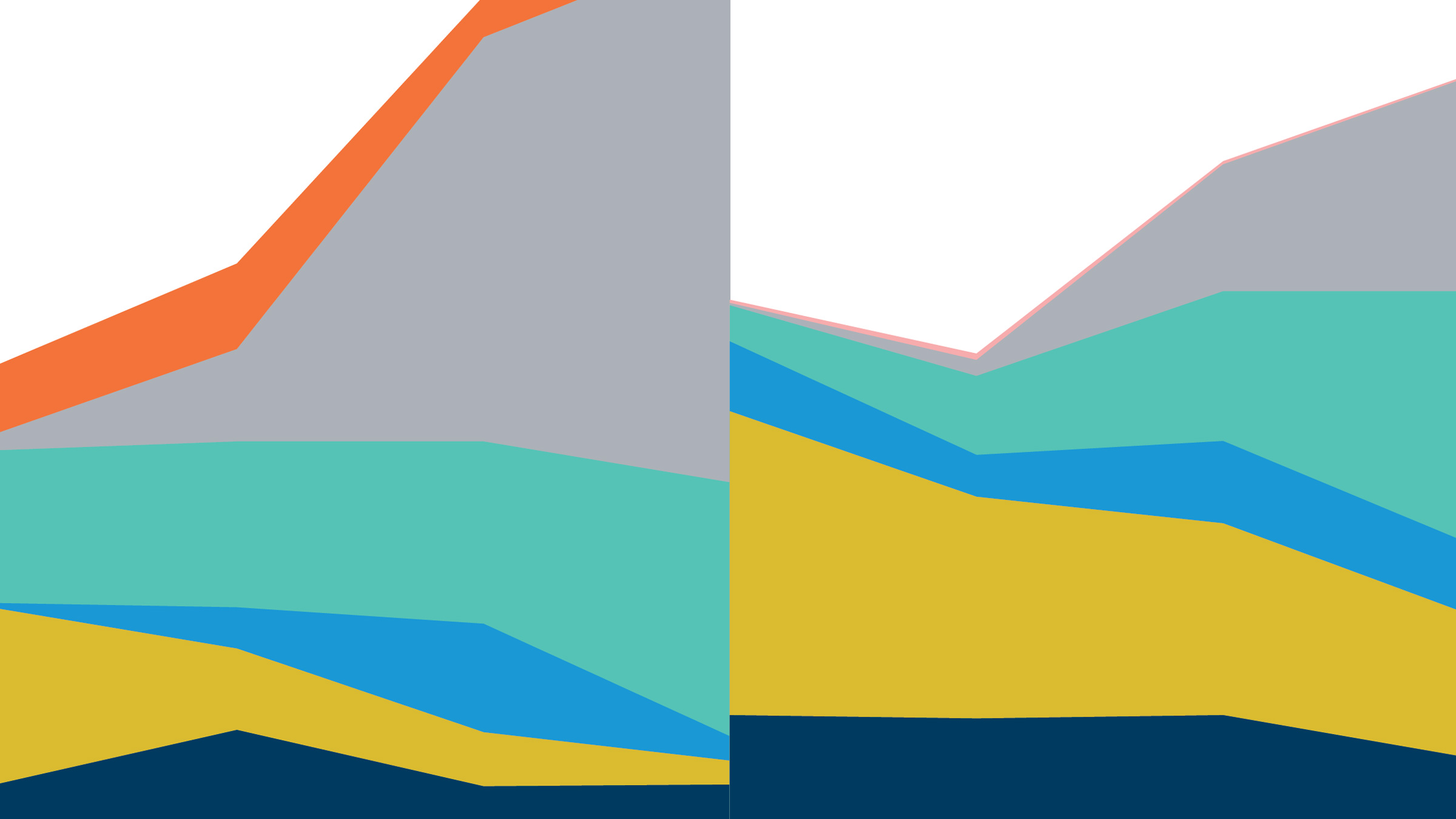

What we actually found was essentially no increase at all in our proxy for price dispersion. Intuitively, what we found was that the frequency of price change almost doubled during this period, and that was almost enough to ensure that the higher inflation had no effect on the functioning of the invisible hand in the economy. So the conclusion from our analysis is that this particular channel—the cost of inflation having to do with price dispersion—doesn’t seem to have been very important empirically during this period.
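Why a higher frequency of price change can neutralize inflation’s effect on dispersion shows up in a stylized Calvo economy with no idiosyncratic shocks, which is an assumption of this sketch and not the measurement in the paper. A price last reset a months ago trails the current reset price by a times monthly inflation, and price ages are geometrically distributed, so dispersion works out to inflation × sqrt(1 − f) / f. The inflation and frequency values below are illustrative.

```python
import math

def relative_price_dispersion(monthly_inflation, monthly_frequency, max_age=5000):
    """Std. dev. of log relative prices in a stylized Calvo economy (hypothetical toy).

    A price last reset `a` months ago trails the current reset price by
    a * inflation; price ages follow the geometric distribution f * (1 - f) ** a.
    """
    f, pi = monthly_frequency, monthly_inflation
    weights = [f * (1.0 - f) ** a for a in range(max_age)]
    mean_gap = sum(w * a * pi for a, w in enumerate(weights))
    mean_sq_gap = sum(w * (a * pi) ** 2 for a, w in enumerate(weights))
    return math.sqrt(mean_sq_gap - mean_gap ** 2)

base = relative_price_dispersion(0.005, 0.10)            # moderate inflation
high_inflation = relative_price_dispersion(0.010, 0.10)  # inflation doubles, frequency fixed
high_both = relative_price_dispersion(0.010, 0.20)       # inflation and frequency both double
print(base, high_inflation, high_both)
```

Doubling inflation alone doubles dispersion in this toy, but doubling the frequency of price change at the same time brings dispersion back to roughly its original level, mirroring the offset described above.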

Now, that said, I want to emphasize that we had a very narrow focus in this project in trying to look at the costs of inflation that we could directly measure, particularly those having to do with price dispersion. There might be a lot of other costs of inflation that we don’t model: for example, costs arising from the fact that high inflation redistributes wealth from savers to borrowers, and costs due to people not accurately perceiving how inflation affects their real income or wealth over time, which might adversely affect their financial planning.

What we would conclude from our analysis is that this conventional channel of price dispersion really isn’t that important empirically, and to the extent that we want to think of inflation having very large costs, we really need to focus our attention on the other potential channels.

This debate is likely to continue in the coming decade for the same reason that I think forward guidance will continue to be a big topic in monetary economics. With interest rates very low for a long time, one of the main policy debates, and something that the Fed is openly discussing, is whether the two percent inflation target makes sense, or whether there should be some consideration of raising the inflation target. From that perspective, thinking about the costs of inflation is important.

Consequences of COVID-19

Let’s turn to the pandemic and its economic consequences. Much of your research, of course, is about shocks to the economy and policy responses to them. What does it tell us about the effects of the COVID-19 shock and possible policy responses?

Actually, our paper on the “plucking model” tries to document and understand several features of the unemployment rate, including one that may be relevant to the current situation. A feature emphasized by Milton Friedman is that the unemployment rate doesn’t really look like a series that fluctuates symmetrically around an equilibrium “natural rate” of unemployment. It looks more like the “natural rate” is a lower bound on unemployment and that unemployment periodically gets “plucked” upward from this level by adverse shocks. Certainly, the current recession feels like an example of this phenomenon.

Another thing we emphasize is that if you look at the unemployment series, it appears incredibly smooth and persistent. When unemployment starts to rise, on average, it takes a long time to get back to where it was before.
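The two features described here, a natural rate that acts as a floor and slow, persistent recoveries, can be mimicked by a toy simulation. This is only an illustration of Friedman’s plucking idea, not the model in the paper; the natural rate of 4 percent, the persistence `rho`, and the frequency and size of adverse shocks are all assumptions.

```python
import random

def simulate_plucking(n_months=600, natural_rate=4.0, rho=0.95,
                      pluck_prob=0.02, seed=1):
    """Toy 'plucking' path: unemployment = natural rate + a non-negative gap.

    The gap decays slowly (persistence rho) and is occasionally plucked upward
    by an adverse shock; nothing ever pushes it below zero.
    """
    rng = random.Random(seed)
    gap, path = 0.0, []
    for _ in range(n_months):
        shock = rng.uniform(1.0, 3.0) if rng.random() < pluck_prob else 0.0
        gap = rho * gap + shock
        path.append(natural_rate + gap)
    return path

path = simulate_plucking()
changes = [b - a for a, b in zip(path, path[1:])]
rises = [c for c in changes if c > 0]
falls = [-c for c in changes if c < 0]
print(min(path), sum(rises) / len(rises), sum(falls) / len(falls))
```

In this setup unemployment never dips below the natural rate, and the typical monthly rise is far larger than the typical monthly decline: it jumps up quickly and takes a long time to come back down.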

This is something that isn’t well explained by the current generation of macroeconomic models of unemployment, but it’s clearly front and center in terms of many economists’ thinking about the policy responses [to COVID-19]. A lot of the policy discussions have to do with trying to preserve links between workers and firms, and my sense is the goal here is to avoid the kind of persistent changes in unemployment that we’ve seen in other recessions.

In terms of other policies, we have just seen (as of March 24) a huge monetary policy response to this event, and we’ve seen a big response on the fiscal side as well. In certain ways, I think, [the monetary policy and fiscal] responses we’ve seen are part of the conventional arsenal of Keynesian stabilization tools, although the situation is quite different, as we discussed earlier.

I feel that the experience of the last recession has at least given us a framework to think about creative tools in both monetary and fiscal policy, and that’s a very good thing. Perhaps we’re more likely to see rapid action as a consequence of that.

On the other hand, the last recession also gave us much higher levels of debt. So clearly, one of the features of the discussion right now is the extent to which a large fiscal stimulus is sustainable in view of the much higher levels of debt we have today than in the last recession.

We’ll close on that sobering observation. Thank you very much.